Don’t trust people, trust their components

I used to think that people were either trustworthy, semi-trustworthy, or unreliable. Basically, there was a single scale, and if you were high enough on that scale, you could be counted on when it came to anything important. My model wasn’t quite this simplistic – for instance, I did acknowledge that someone like my mother could be trustworthy for me but less motivated in helping out anyone else – but it wasn’t much more sophisticated, either.

And over time, I would notice that my model – which I’ll call the Essentialist Model, because it presumes that people are essentially good or bad – wasn’t quite right. People who had seemingly proven themselves to be maximally trustworthy would nonetheless sometimes let me down on very important things. Other people would also trust someone, and seemingly have every reason to do so, and then that someone would systematically violate the trust – but not in general, just when it came to some very specific things. Outside those specific exceptions, the people-being-trusted would still remain as reliable as before.

Clearly, the Essentialist Model was somehow broken.

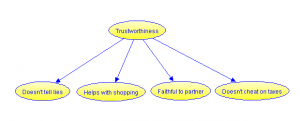

The Essentialist Model of Trust, with a person’s reliability in different situations being a function of their overall trustworthiness.

Sarah Constantin has written a fantastic essay called Errors vs. Bugs and the End of Stupidity, in which she discusses the way we think about people’s competence at something. I encourage you to read the whole thing, but to briefly summarize it, Sarah notes that we often assume the Error Model, according to which people’s skill at something is equal to a perfect performance minus some error. If someone has a large error, they’re bad and will deliver a consistently bad performance, whereas someone with a small error will tend to perform consistently well.

You may notice a certain similarity between the Error Model of Competence and the Essentialist Model of Trust. Both assume a single, continuous skill for the thing being measured, be it competence or trustworthiness. Sarah contrasts the Error Model with the Bug Model, which looks at specific “bugs” that are the causes of errors in people’s performance. Maybe someone has misunderstood a basic concept, which is holding them back. If you correct such a bug, someone can suddenly go from getting everything wrong to getting everything right. Sarah suggests that instead of thinking abstractly about wanting to “get better” – either when trying to improve your own skills, or when teaching someone else – it is more useful to think in terms of locating and fixing specific bugs [1].

I find that trust, too, is better understood in terms of something like the Bug Model. I will call my current model of trust the Component Model, for reasons which should hopefully become apparent in a moment.

Before I elaborate on it, we need to ask – just what does it mean if I say that I trust someone with regard to something? I think it basically means that I expect them to do something, like coming to my assistance when I need them, or I expect them to not do something, like spreading bad rumors about me. Furthermore, my thoughts and actions are shaped by this expectation. I neither take precautions against the possibility of them behaving differently, nor do I worry about needing to. So trusting someone, then, means that I expect them to behave in a particular, relatively specific way [2].

Now we humans are made up of components, and it is those components that ultimately cause all of our behavior. By “components”, I mean things like desires, drives, beliefs, habits, memories, and so on. I also mean external circumstances like a person’s job, their social relationships, the weather wherever they live, and so forth. And finally, there are also the components that are formed by the interaction of the person’s environment and their inner dispositions – their happiness, the amount of stress they experience, their level of blood sugar at some moment, et cetera.

Behaviors are caused by all of these components interacting. Suppose that I’m sick and can’t go to the store myself, but I’m out of food, so I ask my friend to bring me something. Maybe it’s an important part of the identity of my friend to help his friends, so he agrees. He also happens to know where the store is, has the time himself to visit it, isn’t too exhausted to do any extra shopping, and so on. All of these things happen to go right, so he makes good on his promise.

Of course, this isn’t the only possible combination of things that would cause him to carry out the promise. Maybe helping friends isn’t particularly important for his identity, but he happens to like helping people in general. Or maybe he is terribly insecure and afraid of displeasing me, so he agrees even though he doesn’t really want to. If he fulfills his promise, I know that it was due to some combination of enabling factors. But the fulfillment of the promise alone isn’t enough to tell me what those enabling factors actually were. Similarly, if he promises to go but then doesn’t, then I know that something went wrong, but I don’t know what, exactly.

The Essentialist Model of trust takes none of this into account. It merely treats trustworthiness as a single variable, and updates its estimate of the person’s trustworthiness based on whether or not the promise was fulfilled. It may happen that I ask the same person to do something a lot of times, and the responses are always similar, so I get more confident about my Essentialist estimate. But all of the trials happen in relatively similar circumstances: each time, I happen to be activating a similar set of enabling factors. The circumstances, and the type of requests, and the way my friend looks at things, can all change – and all of those things can change the way my friend behaves when I next ask him to do something.

If I am instead using the Component Model, I can try to think about which components might have been the enabling factors this time around, and only increase my confidence in those components. Maybe my friend mentions that he always likes helping out his friends, in which case I can increase my strength of confidence in the component of “likes to help his friends”. Or maybe he looks nervous and jumpy when he brings me the food and lights up when I thank him, in which case it may be reasonable to assume that poor self-esteem was the cause. Of course, I may misread the signs and thus misattribute the cause of the behavior, so I should ideally spread the strength of my belief update over several different possible causes. But this is still a more targeted approach than assuming that this is evidence about his essential nature.
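One way to picture "spreading the belief update over several possible causes" is as an application of Bayes' rule over candidate components. The sketch below is only an illustration: the component names, the prior weights, and the likelihoods are all made-up numbers, and treating the candidate causes as mutually exclusive is a simplifying assumption (in reality several of them can be at work at once).

```python
def update_component_beliefs(priors, likelihoods):
    """Apply Bayes' rule over mutually exclusive candidate causes.

    priors: how plausible each component was as the enabling factor beforehand.
    likelihoods: how probable the observed cues are if that component was the cause.
    Returns the normalized posterior weight for each component.
    """
    unnormalized = {c: priors[c] * likelihoods[c] for c in priors}
    total = sum(unnormalized.values())
    return {c: weight / total for c, weight in unnormalized.items()}


# Hypothetical prior beliefs about why my friend brought me the food.
priors = {
    "likes_helping_friends": 0.5,
    "likes_helping_anyone": 0.3,
    "afraid_of_displeasing_me": 0.2,
}

# Hypothetical likelihoods of the cue "nervous on arrival, lights up when thanked".
likelihoods = {
    "likes_helping_friends": 0.2,
    "likes_helping_anyone": 0.2,
    "afraid_of_displeasing_me": 0.8,
}

posterior = update_component_beliefs(priors, likelihoods)
for component, weight in sorted(posterior.items(), key=lambda kv: -kv[1]):
    print(f"{component}: {weight:.2f}")
```

With these made-up numbers, most of the update lands on the insecurity component, but the other two explanations keep some weight, which is the point: a misread cue does not wipe out the alternatives.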

Now the important thing to realize is that, for someone to behave as I expect them to, every component that’s required for fulfilling that behavior has to work as it should. Either that, or some alternate set of components that causes exactly the same behavior has to come into play. A person can spend a very long time in a situation where the circumstances conspire to keep the exact same sets of components active. But if someone ever gets into a situation where even a single component gets temporarily blocked, or if that component is overridden by some previously dormant component that now becomes active, they may suddenly become unreliable – at least, with regard to the particular behavior enabled by those specific components.
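The "every component has to work, or an alternate set has to take over" structure can be sketched as a small probability calculation. This is a toy model, not a claim about how anyone actually computes trust: the component names and numbers are hypothetical, and it assumes that components (and alternate sets) work or fail independently of each other, which real people certainly violate.

```python
from math import prod


def set_works(component_probs):
    """Probability that every component in one enabling set works.

    Assumes the components are independent of each other.
    """
    return prod(component_probs.values())


def behavior_probability(enabling_sets):
    """Probability that at least one enabling set works in full.

    Assumes the sets are independent of each other, which is a simplification.
    """
    p_all_sets_fail = prod(1 - set_works(s) for s in enabling_sets)
    return 1 - p_all_sets_fail


# Hypothetical components behind "my friend brings me food when I'm sick".
usual = {
    "wants_to_help": 0.95,
    "knows_the_store": 0.99,
    "has_time": 0.9,
    "not_exhausted": 0.9,
}

# Same friend, but one component is temporarily blocked by a busy week.
busy_week = dict(usual, has_time=0.1)

# A hypothetical alternate route to the same behavior: he orders a delivery.
delivery = {"wants_to_help": 0.95, "thinks_of_delivery": 0.5}

print(behavior_probability([usual]))                # roughly 0.76
print(behavior_probability([busy_week]))            # roughly 0.08
print(behavior_probability([busy_week, delivery]))  # higher again, thanks to the alternate set
```

Blocking a single component is enough to collapse the estimate, even though nothing about the friend's "essential" trustworthiness has changed.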

Various things might cause someone to renege on their promises or obligations. The person might…

- …be too exhausted.

- …prioritize a conflicting obligation they have towards someone else who needs their help more.

- …misjudge the situation and make a mistake.

- …want to refuse to do something, but be too afraid to say so.

- …genuinely forget having made a promise.

- …not realize that we expect them to do something in the first place.

- …act in such a heat of passion that the components creating that passion shut down every other component, in which case we might say that they “couldn’t help themselves” – though we might also hold that knowing their own poor self-control, they shouldn’t have gotten themselves in that situation in the first place.

- …never have intended to fulfill the promise in the first place, lying to gain personal advantage.

- …have no regard for other people, doing whatever benefits them personally the most.

Some of these reasons we would often consider acceptable, others are more of a gray area, and some we consider as clearly worthy of moral blame no matter what.

That kind of a categorization does have value. It helps us assign moral blame and condemnation when it’s warranted. Some components also have more predictive value than others – if it turns out that someone has no regard for others, then they are probably unreliable in a lot of different situations. Somebody who had good intentions is more likely to succeed the next time, if the things that caused him to fail this time around are fixed.

But we should also notice that at heart, all of these are just situations where we might be disappointed if our model of other people’s behavior doesn’t take everything relevant into account. In each case, some set of components interacted to produce a behavior which wasn’t what we expected. That’s relevant information for predicting their behavior in other situations, to avoid getting disappointed again.

If we are thinking in terms of the Essentialist Model, we might be tempted to dismiss the morally acceptable situations as ones that “don’t count” for determining a person’s trustworthiness. We are then basically saying that if somebody had a good reason for doing or not doing something, then we should just forget about that and not hold it against that person.

But just forgetting about it means throwing away information. Maybe we should not count the breach of trust against the person, in moral terms, but we should still use it to update our model of them. Then we are less likely to become disappointed in the future.

Sarah mentions that when you start thinking in terms of the Bug Model of Competence, you stop thinking of people as “stupid”:

Tags like “stupid,” “bad at ____”, “sloppy,” and so on, are ways of saying “You’re performing badly and I don’t know why.” Once you move it to “you’re performing badly because you have the wrong fingerings,” or “you’re performing badly because you don’t understand what a limit is,” it’s no longer a vague personal failing but a causal necessity. Anyone who never understood limits will flunk calculus. It’s not you, it’s the bug.

This also applies to “lazy.” Lazy just means “you’re not meeting your obligations and I don’t know why.” If it turns out that you’ve been missing appointments because you don’t keep a calendar, then you’re not intrinsically “lazy,” you were just executing the wrong procedure. And suddenly you stop wanting to call the person “lazy” when it makes more sense to say they need organizational tools.

“Lazy” and “stupid” and “bad at ____” are terms about the map, not the territory. Once you understand what causes mistakes, those terms are far less informative than actually describing what’s happening.

A very similar thing happens when you start thinking in terms of the Component Model of Trust. People are no longer trustworthy or non-trustworthy: that’s not a very useful description. Rather they just have different behavioral patterns in different situations.

You also stop thinking about people as good or bad, or of someone either being nice or a jerk or something in between. To say that someone is a jerk for not keeping their promises, or for actively deceiving you, just means that they have some behavioral patterns that you should watch out for. If you can correctly predict the situations where the person does and doesn’t exhibit harmful behaviors, you can spend time with them in the safe situations and avoid counting on them in other situations. Of course, you might be overconfident in your predictions, so it can be worth applying some extra caution.

For that matter, the question of “can I trust my friend” also loses some of its character as a special question. “Can I trust my friend” becomes “will my friend exhibit this behavioral pattern in this situation”. As a question, that’s not that different from any other prediction about them, such as “would my friend appreciate this book if I bought it for them as a gift”. Questions of trust become just special cases of general predictions of someone’s behavior.

Some of my more cynical friends say that I tend to have a better opinion of people than they do. I’m not sure that that’s quite it. Rather, I have a more situational opinion of people: I’m sure that countless folks would be certain to stab me in the back in the right circumstances. I just think that those right circumstances come up relatively rarely, and that even people with a track record of unreliability can be safe to associate with, or rely on, if you make sure to avoid the situations where the unreliability comes into play.

Bruce Schneier sums this up nicely in Liars and Outliers:

I trust Alice to return a $10 loan but not a $10,000 loan, Bob to return a $10,000 loan but not to babysit an infant, Carol to babysit but not with my house key, Dave with my house key but not my intimate secrets, and Ellen with my intimate secrets but not to return a $10 loan. I trust Frank if a friend vouches for him, a taxi driver as long as he’s displaying his license, and Gail as long as she hasn’t been drinking.

On the other hand, to some extent the Component Model means that I trust people less. I don’t think that it’s possible to trust anyone completely. Complete trust would imply that there was no possible combination of component interactions that would cause someone to let me down. That’s neither plausible on theoretical grounds, nor supported by my experience with dealing with actual humans.

Rather, I trust a person to behave in a specific way to the extent that I’ve observed them exhibiting that behavior, to the extent that their current circumstances match the circumstances where I made the observation, and to the extent that I feel that my model of the components underlying their behavior is reliable. If I haven’t actually witnessed them exhibiting the behavior, I have to fall back on what my model of their components predicts, which makes things a lot more uncertain. In either case, if their personality and circumstances seem to match the personalities and circumstances of other people who I’ve previously observed to be reliable, that provides another source of evidence [3].

That’s what you get with the Component Model: you end up trusting the “bad guys” more and the “good guys” less. And hopefully, this lets you get more out of life, since your beliefs now match reality better.

Footnotes

1: See also muflax’s hilarious but true post about learning languages.

2: Some may feel that my definition for trust is too broad. After all, “trust” often implies some kind of an agreement that is violated, and my definition of trust says nothing about whether the other person has promised to behave in any particular way. But most situations that involve trust do not involve an explicit promise. If I invite someone to my home, I don’t explicitly ask them to promise that they won’t steal anything, yet I trust their implicit promise that they won’t.

Now the problem with implicit promises is that you can never be entirely sure that all parties have understood them in the same way. I might tell my friend something, trusting them to keep it private, but they might be under the impression that they are free to talk about it with others. In such a case, there isn’t any “true” answer to whether or not there existed an implicit promise to keep quiet – just my impression that one did, and their impression that one did not.

Even if someone does make an explicit promise, they might not think to mention that there are exceptions when they would break that promise, ones which they think are so obvious that they don’t even count those as breaking the promise in the first place. For example, I promise to meet my friend somewhere, but don’t explicitly mention that I’ll have to cancel in case my girlfriend gets into a serious accident around that exact time. Or maybe I tell my friend something and they promise to keep it private, but neglect to say that they may mention it to someone trustworthy if they think that that will get me out of worse trouble, and if they also think that I would approve of that.

Again, problems show up if people have a differing opinion of what counts as an “obvious” exception. And again there may not be any objective answer in these situations – just one person’s opinion that some exception was obvious, and another person’s opinion that it wasn’t at all obvious.

This isn’t to say that a truth could never be found. Sometimes everyone does agree that a promise was made and broken. Society also often decides that some kinds of actions should be understood as promises. For instance, the default is to assume that one will be sexually faithful towards their spouse unless there is an explicit agreement to the contrary. Even though it may not be an objective truth that violating this assumption means a violation of trust, socially we have agreed to act as if it did. Similarly, if someone thinks that a written contract has been broken, we let the courts decide on a socially-objective truth of whether there really was a breach of contract.

Still, it suffices for my point to establish that there exist many situations where we cannot find an objective truth, nor even a socially-objective truth, of whether someone really did make a promise which they then violated. We can only talk about people’s opinions of whether such a promise existed. And then we might as well only talk about how we expect people to act, dropping the promise component entirely.

3: Incidentally, the Component Model may suggest a partial reason for why we tend to like people who are similar to ourselves. According to the affect-as-information model, emotions and feelings contain information which summarizes the subconscious judgements of lower-level processes. So if a particular person feels like someone whose company we naturally enjoy, then that might be because some set of processes within our brains has determined that this is a trustworthy person. It makes sense to assume that we would naturally trust people similar to ourselves more than people dissimilar to ourselves: not only are they likely to share our values, but their similarity may make it easier for us to predict their behavior in different kinds of situations.

“I don’t think that it’s possible to trust anyone completely. Complete trust would imply that there was no possible combination of component interactions that would cause someone to let me down. That’s neither plausible on theoretical grounds, nor supported by my experience with dealing with actual humans.”

This made me think about “complete trust” in someone as an expression of love. If I know from experience that there is a significant chance that my partner will let me down, but I still count on him or her unconditionally, because I see that as essential for the special relationship that I have with him or her – do I then trust that person, or not trust that person? There seems to be a difference between “trusting” as an act, and “trusting” as a rational assessment.

My thought is: although I, too, think that complete trust in someone is never justified in rational terms, I wonder if it is essential for love relations that sometimes I just ignore rational thinking on that point.

Right. Decision theory tells us that if the probability of a disappointment times the cost of the disappointment is smaller than the cost of preparing for the disappointment, then the best action is to just ignore the possibility of the disappointment… in which case counting on someone would actually be the rational decision, since that gives a better expected payoff. In practice, we do this all the time, since it wouldn’t be a good idea to spend all of our time and resources preparing for low-probability threats.
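The rule of thumb above can be written out as a tiny expected-cost comparison. This is a minimal sketch with hypothetical names and numbers, and it assumes that preparing fully removes the cost of the disappointment, which is a simplification.

```python
def should_prepare(p_disappointment, cost_of_disappointment, cost_of_preparing):
    """Return True if preparing is cheaper than the expected loss from not preparing.

    Assumes that preparing completely avoids the cost of the disappointment.
    """
    expected_loss = p_disappointment * cost_of_disappointment
    return expected_loss > cost_of_preparing


# A 1% chance of a 100-unit loss is not worth a 5-unit safeguard...
print(should_prepare(0.01, 100, 5))  # False: just count on the person

# ...but a 30% chance of the same loss is.
print(should_prepare(0.30, 100, 5))  # True: take precautions
```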

My definition of trust conflated trust in the sense of not expecting someone to disappoint you (thought) with trust in the sense of not preparing for that disappointment (action). That was somewhat deliberate, since it makes it easier to talk about trust as a sliding scale. With a very high trust, you don’t even waste time considering the probability of a disappointment, with a somewhat lower one you acknowledge its possibility but don’t do anything about it, and with a sufficiently low level of trust you start taking safeguards against it.

“I don’t think that it’s possible to trust anyone completely” is a natural consequence of the simple factual observation that you can’t even completely trust your own future self – there are some promises that your future self will keep, and a bunch of promises that you can make that your future self is very likely to violate. And trusting someone else’s future self has at least all the same issues, and a few more in addition.

I wouldn’t call it a natural consequence, since your future self isn’t necessarily the most trustworthy person you know.

The shift from the essentialist theory to the component theory is basically what happened in personality psychology (at least in a large segment of the field). Walter Mischel’s Wikipedia page does a decent job of summarizing this shift: Mischel “suggested that consistency would be found in distinctive but stable patterns of if-then, situation-behavior relations that form contextualized, psychologically meaningful ‘personality signatures’ (e.g., ‘she does A when X, but B when Y’).”

The essentialist model seems to me an instance of (or at least related to) the fundamental attribution error. Instead of attributing people’s behavior to (pretty much random) external factors, you think of everything they do as representative of their “true personality”.

Well, the defining behaviors of personality are not randomly expressed even if it is inaccurate to describe one’s default personality as “true” (does anyone use this term?). Personality is dynamic, it can develop through the lifespan, and it can adapt/maladapt to a limited extent, which might fool some people into thinking that known others are generally unpredictable in any given situation (I don’t know anyone who truly thinks this way), but there is no empirical evidence that I know of that concludes that behavior is solely a non-personality (non-patterned/unpredictable/random/non-“true”) response to random external factors. If behavior is not the result of decisions that are made through the cortical centers that work together to result in a somewhat patterned reaction to external circumstance (i.e., work together to produce “personality”), then what is it?

I have an issue with the term “random external factor”. What is a “random external factor”? Is it an external circumstance that the subject has not before encountered? Or is it an external circumstance that you have not before observed the subject encountering? Or is it merely an occurrence that wasn’t predicted but that the subject may or may not be familiar with? It would likely be more clear to state that you believe that “behavior is most often a random response to external factors”. If I interpret your reply correctly, that is what you were attempting to convey. No offense, I’m just attempting to clarify. Please correct me where I may be off.

Assuming that you have observed the subject encountering a “random external factor” before, then behavior can be somewhat predictable. If you have not but someone else has, the behavior can be predictable but maybe not by you. If the subject has not before encountered the circumstance, then behavior is not predictable but may be predictable the next time they encounter it assuming that you are aware of what they may have learned from any sub-optimal outcome. In any situation, I’m not sure how the possibility of behavioral, logical, and emotional patterns (personality) can be eschewed for an assumption that behavior is random.

Btw, Kaj, this is a great article. I have before had a lot of difficulty in reconciling my learned (innate?) sense of trust, and how I think this behavioral ideal and exchange should be upheld, with how people actually interact. The resultant lessons are important for people like me, but nonetheless are initially difficult, and the common knee-jerk interaction-avoiding response doesn’t tend to confer any power in return that isn’t born out of merely circumventing a bad result. The component model seems to be a great alternative to the A/B trust/no trust perspective that I had before resigned myself to, although I long ago also resigned myself to filtering information and my response through something akin to the component model whenever in a romantic relationship/interaction with the opposite sex. This was likely because I wanted the relationship to work in spite of any core human tendencies or flaws. The increased social flexibility will be appreciated. Thanks.

Anonymous, I was reading too fast as I often do, and misread your critique. You can disregard my response.

I agree with this descriptively, and have lived by it often. But prescriptively, a problem is that if your behavior *shows* not only limits on the trust you have in people “on your side”, but also trust (in some instances) in bad people [that even you might consider so], that can weaken community bonds – and you live in a society where few people but you consciously notice that; imagine if the attitude was common. Granted, if you already live fully atomized, that’s the only intelligent thing to do; otherwise, take care (and some dishonesty involved may be why the *older* thinking could be advantageous).